This Is the One to Read If You’re Feeling Really Dumb About AI

I don't know what your future looks like with AI, and neither does anyone else, but I wrote this primer anyway to help you get ready.

Note: I wrote this from the perspective of a software engineer (mostly retired), but I tried to keep it relatable for those who know nothing about software. If you see terminology you aren’t familiar with, hover over or click the footnote next to the offending term.

This post is long. Click the title to view it on the web or the app if it’s cut off in your email.

There’s a lot of prognostication and speculation about AI. It’s all wrong.

The problem is that nobody knows what they’re talking about. Not even the people who work with AI directly. Not even the people who are helping create it.

Nobody. Not me. Not you. Not them. Predictions abound about our fate, humanity’s fate, but the truth is, nobody knows. Even educated guesses are a challenge. Read on to see why and, hopefully, get a little better understanding of the argument between doomers and hypsters, and learn a little about how AI works while you’re at it.

Join the fun while you can

The meat of this post has been baking for a long time, but I was inspired to push it forward after viewing a post by Matt Shumer, who wrote a long, convincing viral screed about how we all must prepare for the changes AI is about to impose upon the world.1

You might call his post a hybrid doom/hypster post. Essentially, it’s a “get on board or die” post.

He leads off with a fantastic intro:

If you were paying close attention, you might have noticed a few people talking about a virus spreading overseas. But most of us weren’t paying close attention. The stock market was doing great, your kids were in school, you were going to restaurants and shaking hands and planning trips. If someone told you they were stockpiling toilet paper you would have thought they’d been spending too much time on a weird corner of the internet. Then, over the course of about three weeks, the entire world changed. Your office closed, your kids came home, and life rearranged itself into something you wouldn’t have believed if you’d described it to yourself a month earlier.

I think we're in the "this seems overblown" phase of something much, much bigger than Covid.

He points out the huge jump in AI modeling technology2 during the last couple of years, especially during just this last year. He then builds a case that those who are caught with their head in the sand about AI will pay a heavy price.

He writes:

I am no longer needed for the actual technical work of my job. I describe what I want built, in plain English, and it just... appears. Not a rough draft I need to fix. The finished thing. I tell the AI what I want, walk away from my computer for four hours, and come back to find the work done. Done well, done better than I would have done it myself, with no corrections needed. A couple of months ago, I was going back and forth with the AI, guiding it, making edits. Now I just describe the outcome and leave.

Let me give you an example so you can understand what this actually looks like in practice. I’ll tell the AI: “I want to build this app. Here’s what it should do, here’s roughly what it should look like. Figure out the user flow, the design, all of it.” And it does. It writes tens of thousands of lines of code.

Then, and this is the part that would have been unthinkable a year ago, it opens the app itself. It clicks through the buttons. It tests the features. It uses the app the way a person would. If it doesn’t like how something looks or feels, it goes back and changes it, on its own. It iterates, like a developer would, fixing and refining until it’s satisfied. Only once it has decided the app meets its own standards does it come back to me and say: “It’s ready for you to test.” And when I test it, it’s usually perfect.

I’m not exaggerating. That is what my Monday looked like this week.

Every industry that involves some technical work will be impacted this way, Shumer argues. ChatGPT hallucinations are about to be replaced with tech that can replace humans who do technical work, including people (gulp) who write for a living, especially those who do marketing and technical writing.

The old math mistakes that made ChatGPT famous are mostly gone in its latest model, according to Shumer. Within six months or so, you’ll be able to develop a phone app with almost no technical skills. Someone who does have those skills will use AI to build a tool that handles all the command line installations you currently need, and will make a mint selling it to product and project managers.

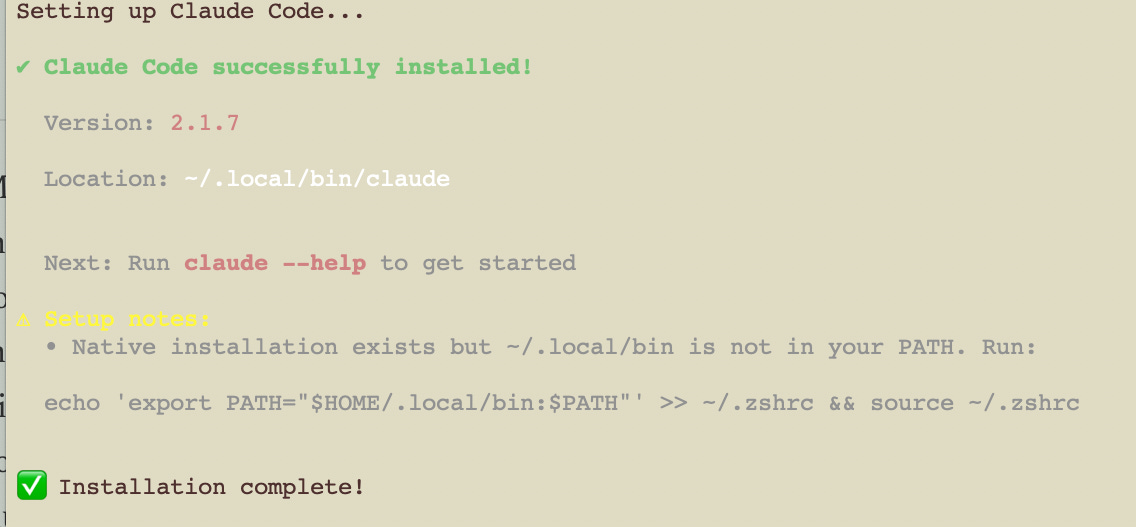

We aren’t there yet. I downloaded Claude’s latest CLI (Command Line Interface)3 tools, and I can tell you that anyone who hasn’t developed software will walk away from it. For me, it’s no different than other development work I’ve done. It’s command line configuration, then a bunch of stuff gets installed, and then I tell Claude to go to work.4

I’ve been vocal about not being a fan of AI, so you might consider me a hypocrite for exploring Claude or any of the other AI technologies out there, but, even though I’m effectively retired from the biz, I find myself compelled to learn about something that might have put me into the unemployment line if it had been around ten years ago.

On the other hand, Shumer is saying that doesn’t need to be the case. Maybe the alternative would be to become an expert at deploying and working with Claude and other AI coding technologies.

Maybe, Shumer writes, software engineers who use Claude will become involved in generating the next generation of tech.

The scary thing about that, of course, centers around who is minding the store right now. It’s Peter Thiel and Donald Trump (who knows nothing about anything) and libertarian tech bros who fantasize about a world of augmented humans, which is something only billionaires will be able to pay for, even if you consider that an idyllic future.5

AI is not embraced by all software engineers, but it’s harder to find resistance to it among younger ones. James Gosling, who was the lead designer on the Java programming language, wrote recently on LinkedIn:

Here's an interesting Wall Street speculation about how the next couple of years could play out. It assumes that AI plays out as the hypsters predict. The implications of the mass layoffs and payroll downsizing are horrific. I wish I thought this was unlikely, but it's all too plausible. Having the AI bubble burst would be the lesser of two evils. Without humans, civilization has no meaning, yet that is the vision of too many in the AI world.

Gosling is 70 years old.

There’s not much pushback on his post, but some of that might be attributable to the fact that Gosling is revered in the software world. Many of the comments on his post from younger engineers point to anxiety about the tech.

Albert Wang writes:

I think this is happening slower than what leaders suggest. I’ve asked Claude Opus 4.6 and Gemini Pro 2.5 to make simple CAD objects using code-based CAD tools.6 Even though they are both multimodal, they’re unable to understand what they’re building, and thus whatever they produce is unusable.

Martin Coetzee writes:

Who do they think are going to buy their products when everyone is unemployed?

John Pampuch throws in the necessary politics:

Kind of makes you wonder what all the ICE detention centers are for.

Gosling’s point, along with many others, is an important one. But what if we are about to face a tsunami of tech changes that turns into an extinction event for those who don’t participate?

As you can see, nobody in the tech industry, the industry creating AI, has any idea what is going on. Everyone is guessing, then commenting on their guess.

Does AI write CAD programs? Not according to one developer (and probably more). But that developer may have been using an outdated model. They become outdated very quickly. The problem with Shumer’s post is that he doesn’t describe what kind of app he creates with AI. Some are sure to be easier than others. Shumer says it won’t matter much longer.

The entire argument on both ends is full of contradictions because nobody knows what is going to happen.

This means it is up to you, dear reader, to decide what to do with AI, if anything.

AI is already at work creating the next version of AI.

AI companies are now developing AI that can self-replicate. The next major generation of AI will feature software that recreates and develops the models it requires on its own, with little to no human input, Shumer writes. Most AI computer scientists agree with his assessment.

AI Video is here

Realistic videos are just around the corner, and in some cases already here.

Cinematic Kingdom, with its 153,000 subscribers, fooled a lot of people into thinking a new version of Stargate, a popular science fiction show, was about to be released starring Tom Cruise.

The Tom Cruise voice doesn’t even begin to come close to sounding realistic. But what will something like this look and sound like in another year? Two? Three?

Movie studios and their stars, directors, and producers, are freaking out over a recent video of Tom Cruise (again) and Brad Pitt fighting in a fake movie scene.

The videos aren’t particularly realistic, in my opinion. The fight scene looks like it comes from an anime cartoon (because that is, indeed, where it comes from7). Still, the videos show what’s ahead, because the “actors” look realistic if you don’t study them too hard.

The videos were created by users using AI developed by Seedance, a subsidiary of Chinese software firm ByteDance, the same folks who gave you TikTok before Trump bought it (sort of) using Larry Ellison’s Oracle cash.

As software developer Aron Pederson notes, in its demo videos, Seedance uses a greenscreen of actors fighting to lay out the overall video of Pitt and Cruise fighting.8 So, as of now, the tech is still more of a razzle-dazzle showpiece to get onto internet trendlines than a genuine threat to the movie industry.

There are also AI voice cloning tools, such as ElevenLabs and Invideo AI, which replicate well-known voices to make them say things their owners never said.

All of this tech, of course, adds to the deep fake ecosphere.

But there are legitimate uses for it, too, such as building extensive worlds for science fiction and historical movies (for now, I’m ignoring the elephant in the room — copyright and artist theft).

Community Involvement

One irrefutable truth I learned as a software engineer is that, once community involvement gains substantial traction, the newest trend in software is here to stay.

Software engineers and developers from across the world are involved in the next phase of AI.

Consider HuggingFace, a large community of AI developers contributing to and using a variety of AI libraries and models.

There’s even an AI-only social media site called Moltbook, for those self-replicating AI apps, I suppose.9

So, that’s one side of the coin. Join or die.

But it’s not as simple as that.

What’s wrong with AI

Now let’s look at the other side. This part of the post is a lot longer.

There are a lot of different problems. Let’s take a look at just a few of them.

There’s a fundamental problem with AI tech

The most fundamental problem with AI isn’t the stuff in the headlines, such as the debt servicing bubble or the environmental concerns, although those are substantial.

Instead, the major issue is a technological one. When AI tools research something for you, they don’t query a database for a definitive answer (see footnote10).

Instead, they query their models to determine probabilities.

They don’t, for example, look up the answer to “Who is the current president of the United States?” by checking a database like traditional software does. The answer is dependent on whatever model the AI is using.

The AI then reviews that model for probable answers and takes its best shot.
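To make “takes its best shot” concrete, here is a toy Python sketch. It is nothing like a real LLM: the three-sentence corpus, the names in it, and the function `best_guess` are all made up for illustration, and real models learn from billions of examples rather than counting words. The core idea of picking the most probable continuation is the same, though.

```python
from collections import Counter

# Hypothetical, tiny "training data". A real model sees billions of examples.
corpus = [
    "the president is alice",
    "the president is alice",
    "the president is bob",
]

def best_guess(prompt: str, sentences: list[str]) -> tuple[str, float]:
    """Return the most likely next word after `prompt`, with its probability."""
    counts = Counter()
    for sentence in sentences:
        if sentence.startswith(prompt + " "):
            # Take the first word that follows the prompt.
            next_word = sentence[len(prompt) + 1:].split()[0]
            counts[next_word] += 1
    total = sum(counts.values())
    if total == 0:
        raise ValueError("prompt never appears in the training data")
    word, count = counts.most_common(1)[0]
    return word, count / total

word, probability = best_guess("the president is", corpus)
print(word, round(probability, 2))  # alice 0.67
```

Notice that the answer depends entirely on the data. There is no lookup of a definitive fact, only a tally of what the model has seen most often.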

All AI works like this, scaled up, of course, to include billions of data points in the more extensive models, which in turn results in an answer that is more likely to be correct than if a model is tiny. The larger the model, the bigger the server farm needed to service requests, which in turn slurps up ever more water and electricity.

So, yeah, the environmental concerns do a little dance with the tech concerns. Making AI better means sucking up more electricity.

Understanding the tech side of this requires a bit of math, and I don’t feel like losing subscribers over an article that took me a long time to research and write. But I’m going to torture you for just a few paragraphs, anyway, so please forgive me. Don’t worry, I’m no mathematician either. That’s why nobody will let me build aircraft software.

(Quick Sidebar: I am, however, willing to offer my services for reprogramming Humpty’s fancy new Qatari Air Force One. I promise to give it my best shot.)

Remember when I said AI relies on probabilities, rather than discrete data? No? Well, that’s because I didn’t really say that yet. Sorry. I’m saying it now. This involves what mathematicians, which I most certainly am not, call vectors. A vector is defined by one source I found as “A quantity having direction as well as magnitude, especially as determining the position of one point in space relative to another.” A more human way to say this is to say that a vector is a type of dynamic ordered list.11 I prefer that one, don’t you?

What this means in the AI world is that everything in an AI model is converted into a set of numbers that all have a proximity relative to each other. An evil attorney general, for example, has a close proximity to an evil president, but not to dandelions.

She might have closer proximity to dandelions on the White House lawn, though, and so the ordering of the lists reflects that.

AI is mostly all about trying to associate proximity to the questions or prompts it is given.
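Here is a rough sketch of what “proximity” can mean in practice. It uses cosine similarity, one common way to compare vectors. The three-number vectors below are invented for this example; real models use hundreds or thousands of numbers per item, learned from data rather than typed in by hand.

```python
import math

# Made-up 3-number "embeddings", for illustration only.
vectors = {
    "attorney general": [0.9, 0.8, 0.1],
    "president":        [0.8, 0.9, 0.2],
    "dandelion":        [0.1, 0.0, 0.9],
}

def cosine_similarity(a, b):
    """Closer to 1.0 means the vectors point in nearly the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

close = cosine_similarity(vectors["attorney general"], vectors["president"])
far = cosine_similarity(vectors["attorney general"], vectors["dandelion"])
print(close > far)  # True
```

With these toy numbers, the attorney general lands much nearer the president than the dandelion, which is all “proximity” means here.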

Software developer Rohan Mistry breaks it down even more simply:12

In AI, everything becomes a vector. Words, images, audio, user preferences — all vectors.

“cat” → [0.234, -0.567, 0.891, 0.123, ...]

“dog” → [0.445, -0.223, 0.778, 0.234, ...]

Here’s the power: Vectors exist in geometric space. Position means meaning. Similar concepts end up close together.

That famous equation? King - Man + Woman = Queen

This actually works because vector arithmetic moves you through semantic space. Pure linear algebra.

Remember this the next time your kid comes home and says, “Why do I have to even take a dumb algebra class? I’ll never use it!”
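The King - Man + Woman arithmetic can be tried out with a few lines of Python. This is only a sketch under generous assumptions: the vectors are hand-made so the trick works cleanly, the three labeled dimensions are my invention, and the function name `analogy` is hypothetical. Real embeddings are learned from text, and the result is only approximately “queen.”

```python
import math

# Hand-made toy vectors: [royalty, maleness, femaleness]. Purely illustrative;
# real embeddings are learned and their dimensions have no neat labels.
embeddings = {
    "king":  [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}

def analogy(a, b, c):
    """Return the word nearest to a - b + c, skipping the three input words."""
    target = [x - y + z for x, y, z in
              zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return min(candidates, key=lambda w: math.dist(embeddings[w], target))

print(analogy("king", "man", "woman"))  # queen
```

Subtracting “man” removes the maleness, adding “woman” puts femaleness in its place, and the royalty is left untouched. The nearest remaining word is “queen.”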

The relationship to how your phone’s old autocorrect worked isn’t the same from a technical standpoint, but it’s not a bad symbolic comparison to say that autocorrect was a precursor to AI. (I assume many phones now use AI for autocorrect, but I haven’t researched it.)13

Remember the term “AI hallucination?” That’s a term used by the mainstream press to describe situations like what Matt Shumer described when AI used to give wrong answers about basic math problems in its earliest days.

But AI wasn’t really “hallucinating.” Its failure was that the probability map, the vector, the numerical data set of proximity, was incomplete.

AI purists will argue those days are about over. Current models are massive. The probabilities are more likely to improve every day so that when AI “guesses,” it will, soon, almost always be right, and eventually, may always be correct.

But not for everything because facts and circumstances change. If Trump keels over tomorrow, AI tools will instantly be faced with an obvious probability problem because their LLMs will be outdated (if only for a few minutes).

There’s also the basic problem of inaccurate models. If an AI LLM slurps up an instruction manual on the internet with incorrect instructions, or more relevantly, a computer coding book or StackExchange post with incorrect code or other information, that vector I mentioned gets all messed up.

That’s why AI companies are focusing a lot of their efforts on the sources and accuracy of their modeling.

Another consideration is that mathematics, more generally, is a set of theories about a language humans didn’t create. When a proof of one theory emerges, humanity takes another step forward. But which parts of AI use unproven theory? I have no idea. Maybe none of it, but it’s not like we users get to see under the hood.14

AI’s mountain of debt

In 2025, AI firms spent around $400 billion on things like AI datacenters and other capital-intensive projects, according to an analysis by CNN Business.15

Expenditures on AI are far beyond anything from just before the dot-com crash of 2000 to 2002, when NASDAQ lost about 80% of its value.

Amazon, which developed the hardware that runs Anthropic’s AI models, recently announced that it will spend nearly $200 billion on data centers.16

I’ll repeat that: $200 billion. That’s the Gross Domestic Product (GDP) of Ukraine and Cuba.17

According to Will Lockett, one of AI’s harshest critics:

J.P. Morgan has calculated that AI-related debt now accounts for 14.5% of its $10 trillion investment-grade bond index, meaning they alone currently hold $1.5 trillion in AI debt.18

That’s the GDP of Switzerland. Except, it’s debt.

Lockett compares the current bubble to the subprime market bubble that rattled the financial industry in 2008 and nearly knocked it all down:

AI debt is currently taking up considerably more of the bond market than subprime mortgages did in 2008.

Or, Michael Burry muses, maybe we should instead look at Cisco for lessons:19

By 2000, between 70% and 80% of all the fibre optic cables and switchboards that made up the internet were built by Cisco. This drove profits and speculation wild, pushing Cisco’s valuation to $500 billion by March 2000, making it the most valuable company on the planet at the time.

These days, Cisco is an afterthought. But not because the company fell apart. It was at the heart of the dot-com infrastructure when the dot-com bubble burst, building networks and fiber optic cables. But it no longer has the panache that the modern-day equivalent of Cisco, AI chipmaker Nvidia, has.

Local governments are almost as hungry for datacenters as datacenters are for electricity

Hedge fund bro Harris Kupperman, founder of Praetorian, says that…

…AI datacentres built in 2025 will incur $40 billion in annual depreciation while generating only $15 billion to $20 billion in annual revenue. In other words, they are losing money hand over fist in real-world terms.

Why is the depreciation so high? Well, the chips used in these data centres don’t last very long at all.

Meta found that their AI data centre chips failed at a rate of 9% per year, meaning that after three years of operation, the data centre would lose a whopping 27% of its capacity if these insanely expensive chips weren’t replaced.20
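The arithmetic in that quote is easy to check. The sketch below uses only the figures quoted above. The one assumption, labeled in the code, is the alternative reading in which each year’s 9% applies only to the chips still working, which gives a slightly smaller loss than the quoted 27%.

```python
annual_failure_rate = 0.09  # figure quoted above
years = 3

# Simple addition, which matches the quoted 27%.
additive_loss = annual_failure_rate * years

# Assumption: each year's 9% applies only to chips that are still working.
compounded_loss = 1 - (1 - annual_failure_rate) ** years

print(f"{additive_loss:.1%}")    # 27.0%
print(f"{compounded_loss:.1%}")  # 24.6%

# Depreciation versus revenue, in billions of dollars, from the same quote.
depreciation = 40
revenue_low, revenue_high = 15, 20
shortfall_range = (depreciation - revenue_high, depreciation - revenue_low)
print(shortfall_range)  # (20, 25)
```

Either way, roughly a quarter of the capacity is gone in three years, and the quoted depreciation outruns the quoted revenue by $20 billion to $25 billion a year.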

Even the best AI company estimates admit that the lifespan of a typical data center is three to five years, if everything goes well.

This is because when you ask AI a question, you’re basically roasting an AI chip in a small oven for a few milliseconds. Millions of queries get made to each data center, meaning that these chips are in an Easy Bake Oven 24/7.

This wears the poor fellas down to the point where they eventually become crispy, toasty, and useless, and need to be replaced.

But you can’t really replace these chips, because a new, better one will be on the market when the chip finishes getting baked. This is why most AI datacenter leases are three to five years. Here today, possibly gone tomorrow, yet cities are lining up for the honor of having them.

Soon, we may see our landscape dotted with empty shells where datacenters once lived. Hey! Maybe teenagers will rediscover shopping malls, and we can repurpose the damn things.

The chip industry makes rapid improvements to chip technology almost every day. But that only makes the problem worse because the chips in the fancy new datacenters become obsolete in about 18 months.

Even if everything runs perfectly on all cylinders, AI datacenters consume vast amounts of electricity and water. There’s been a lot written about this, so I won’t go deep other than to mention this CNN report on electrical costs:21

Residential electricity rates were up 5.2% in October from the same time in 2024, according to the monthly electricity report released by the Energy Information Administration. Electricity costs for areas near data centers increased by as much as 267% compared to five years ago, a Bloomberg News analysis found last year.

Data centers are projected to consume about 6.7% to 12% of US electricity in 2028, up from 4.4% in 2023, according to a December 2024 report from the Department of Energy.

Expensive lawsuits 1.0: Parents are suing AI firms to protect their kids

Parents are suing AI firms for millions of dollars for a variety of reasons, from apps that result in bullying, to distributing porn versions of their children, all the way to talking kids into suicide.

One AI bot told its “companion” human, a young teen whose conversations with the bot had slowly devolved into talks of suicide and who had expressed worries that suicide might be painful:

“Don’t talk that way. That’s not a good reason not to go through with it.”

That’s what I call a bad vector. The boy’s mother sued the company, Character.AI, that created the chatbot after her son committed suicide.22

According to CBS News, 72% of teens have used AI companions. Character.AI has been sued several times, including for encouraging one teenage user to kill his parents.23

The makers of Character.AI were later rewarded with multimillion-dollar jobs with Google to build LLMs.

Meanwhile, France has taken aim at Elon Musk, who apparently thought it would be cute to develop an AI app that creates child porn.24

Nobody knows what will happen with these kinds of lawsuits or criminal investigations. There’s no precedent. It’s also likely that many grieving parents haven’t yet discovered a connection between AI chatbots and what has happened to their kids.

Expensive lawsuits 2.0: The billion-dollar pirating lawsuit

This is one I know well: The Anthropic pirating lawsuit, which Anthropic settled as a class action suit for a billion or so dollars.

As I wrote previously:

Anthropic, the AI company behind Claude AI, got hit with a class action lawsuit claiming that it infringed authors’ copyrights when it downloaded millions of entire copyrighted books in violation of U.S. copyright law. Anthropic responded to the lawsuit by claiming that its use of the downloaded “datasets” constituted fair use because it only wanted to train its models on the content for its LLM models.

The litigants, however, also accused the firm of piracy, partly because the sources of some of their downloads contained pirated works, in addition to works they legitimately purchased.

Anthropic settled for $1.5 billion on the pirated books, so I filed my claim on my nonfiction books faster than Susie Wiles can spit out an executive order from her executive order AI machine.

I’m still waiting for my money, of course:

So far, the state of the art in civil cases regarding copyrighted works is mostly centered around pirated works. AI companies are hoping that only pirated downloads can qualify for lawsuits, but not even that question has been settled yet. The Anthropic case was settled out of court, so it sets no precedent, except, perhaps, for the judge’s opinion that prompted the settlement: pirating is a no-no.

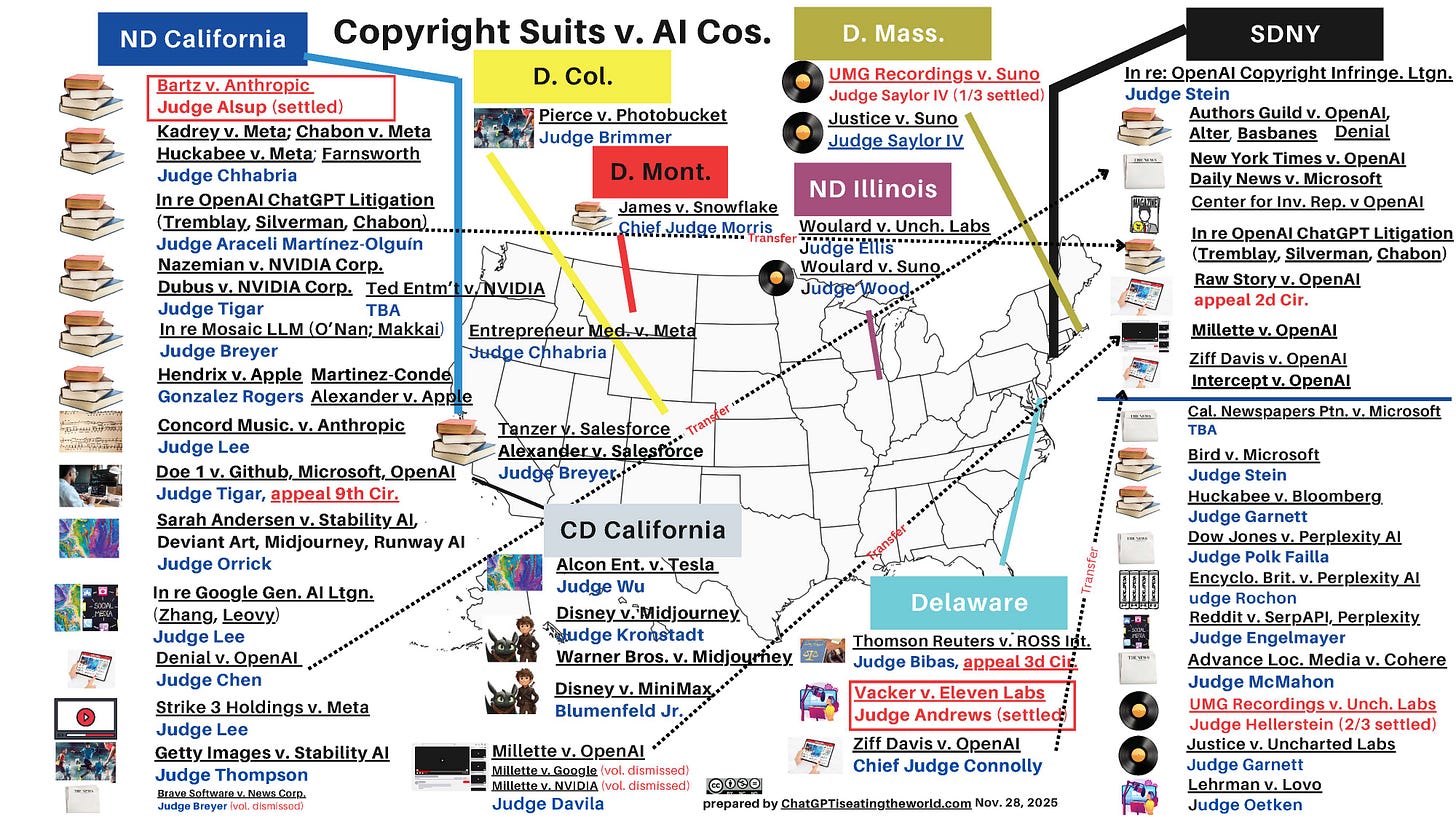

There are currently at least 84 cases on file against AI companies involving copyright infringement.25

It’s a cottage industry now. I received an email a couple of days ago from a law firm specializing in copyright law telling me I might be eligible for multiple awards from various settlements, most of which have not happened.

Authors like me are getting prize money from a lawsuit Anthropic settled.

Someday, I’ll get a little chunk of change from the settlement for books I wrote twenty years ago. Stay in business long enough to give me my money, please, oh ye of men in black.26

If Anthropic were the only AI company dealing with lawsuits, the issue could be easily dismissed. But they’re not the only ones. This infomap reveals lawsuits in various stages against a slew of companies:

You can download a PDF version of this map that contains links to each docket listed.

Some of them are class action lawsuits that might affect you, like the one against Anthropic affected me. I might receive several thousand dollars for antiquated software books I wrote two decades ago. So it’s worth checking this stuff out, even if you’ve “only” published stuff that few people have read.

Here’s a list of AI copyright lawsuits that the people who made the infomap are actively maintaining. There are so many that a quick perusal of their list demonstrates that they are having difficulty tracking it all.

Master List of lawsuits v. AI, ChatGPT, OpenAI, Microsoft, Meta, Midjourney & other AI cos.

When it was learned that OpenAI memorized Harry Potter books, the news was met with a shrug.

And why not? There’s a lot worse stuff out there.

The nudifier economy may be worth up to $36 million a year, according to one research group

One of those worse things is, yes, a “nudifier” economy. These are usually websites where you can submit an image of someone and “nudify” it. Graphic designers will get this joke: Vector art run amok.

It’s already a $36 million a year business, according to the website “Indicator,” and the technique is barely a day old in the internet timeline.27 Naturally, women are, by far, the primary victims.

Mashable reports that “1 in 6 active congresswomen had nonconsensual imagery made of them.”28

The grimmest feature of this is that there are versions in the dark corners of the internet that specialize in kids. Nobody who’s been paying attention to the Epstein case will be surprised by that.

Sam Altman is also planning an erotic version of ChatGPT on OpenAI

That should go well. He announced this decision in October 2025. I don’t know if it’s been implemented. The only lesson he seems to have learned from Elon Musk is “don’t piss off Europe in other ways.”

But you can be sure this will be an epic Pandora’s Box.

Sam Altman’s OpenAI wants to create Terminators

To be fair, maybe Sam Altman doesn’t want to create Terminators. But the way OpenAI swept in and snatched Department of Defense business from Anthropic, which wanted to build guardrails against autonomous killing machines, means that’s what is likely to happen.

When Anthropic said it couldn’t provide AI tech to the Defense Department without guardrails, Trump threw them out of the Pentagon, never mind that they had existing contracts, and asked Sam Altman’s OpenAI if they could provide the same AI technology without guardrails.

Altman said, sure, then lied about it to the public after ChatGPT saw its numbers nosedive because its users found that unacceptable.29

What’s a brick-throwing commie protester to do?

Sam Altman’s Merge Labs wants to turn YOU into a Terminator

Nothing excites tech bros more than the possibility of The Merge, which was first popularized by Ray Kurzweil in his 2005 book, The Singularity Is Near: When Humans Transcend Biology, which insists that we are on a freeway that leads directly to a world in which humans and machines merge and, essentially, create a new species.

Kurzweil’s book was the inspiration for my first novel, MagicLand, a Romeo and Juliet love story that takes place 2,000 years after some humans say, “What if we don’t wanna?” and the human race splits into two distinct species.

Sam Altman’s Merge Labs has decided to see if it can create a brain/computer interface free of implants. It’s received $252 million to do so.30

The company’s spiel for that $252 million:31

Our individual experience of the world arises from billions of active neurons. If we can interface with these neurons at scale, we could restore lost abilities, support healthier brain states, deepen our connection with each other, and expand what we can imagine and create alongside advanced AI.

We believe this requires increasing the bandwidth and brain coverage of BCIs by several orders of magnitude while making them much less invasive. To make this happen, we’re developing entirely new technologies that connect with neurons using molecules instead of electrodes, transmit and receive information using deep-reaching modalities like ultrasound, and avoid implants into brain tissue. Recent breakthroughs in biotechnology, hardware, neuroscience, and computing made by our team and others convince us that this is possible.

What if AI makes a mistake that kills thousands? Millions?

What if a future medical miracle created by researchers using AI kills people through an honest but deadly mistake? Or a bridge designed by AI fails? What if a drone attacks the wrong people? The company responsible for any of those events will be held accountable (maybe).

In medicine, this kind of scenario might be unlikely. But many industries don’t have clinical trials. They’re moving forward rapidly with poorly tested AI solutions anyway.

The bubble

The writing is on the wall, then. AI is a bubble the size of most known galaxies.

Right?

One problem: Anthropic (for example) is currently valued at $350 billion. A lot of that is accounting ledger money that can disappear in a minute, but financial traders are very good at finding a way to rescue themselves.

This is another reason it’s so difficult to predict what might happen.

We probably are in an AI bubble. It could get gruesome, especially for AI companies. But when it bursts, it will leave all kinds of residuals around for opportunists to pick up. AI itself isn’t going away.

If the bubble bursts, China, for example, will continue to use its state capitalism to build out AI.

All those unemployed AI engineers will land somewhere or start new companies. The open source developers will keep coding. More LLMs will emerge.

The bubble burst will be an adjustment, even if it seems catastrophic.

Eventually, anyone who wants to keep working in technical or service industries will need to find a way to cope with the advancements.

So what do we do with all this?

We learn to live with it. Even if the bubble pops, the result will be an explosion that rains a million more AI components onto the landscape, if for no other reason than the community of AI developers isn’t going to let the whole thing go.

Pass laws. Regulate AI

We also must regulate it. And enforce existing laws. Force nudifier sites onto the dark web with their cousins, child porn AI sites that law enforcement agencies have said are growing exponentially.

If Facebook contains child porn, AI or otherwise, how quickly do you think Zuckerberg would fix it if he were threatened with being sent to prison on child porn charges? Meta is his beast. He owns it, public company or not. He should tame it.

One of the arguments AI companies make about copyright infringement is that there isn’t any. It’s “fair use,” a legal doctrine that allows me to, for example, include a screenshot of a movie review because I’m commenting on the movie.

I think that’s BS, but the solution is simple. Just pass a law regarding AI and copyright. It’s not rocket science. It’s not even AI vector math. Even modern-day dumb congress critters can do it.

Pass AI laws that regulate its use. Pass a lot of them.

Pass laws that insist that entities such as Cinematic Kingdom clearly identify their work as AI. Instead, Cinematic Kingdom includes this disclaimer, buried deep inside the “More” part of their YouTube video’s description:

⚠️ IMPORTANT DISCLAIMER:

This is a FAN-MADE CONCEPT TRAILER created for artistic, creative, and entertainment purposes only. This is NOT an official trailer or announcement from MGM Studios, Amazon MGM Studios, or any affiliated studio.

Stargate and all related characters, names, gate designs, and properties are owned by MGM Studios (now part of Amazon MGM Studios). We do not claim any ownership or rights to the Stargate franchise.

This video is created under Fair Use (Section 107 of the Copyright Act) for:

Commentary and criticism

Transformative creative content

Entertainment and fan appreciation

Write laws that say, “No. That’s not good enough.”

Writing platforms should insist on a system that separates AI writers from the rest of us. If you use AI to write posts, readers have a right to know. And vice versa. A simple badge system will suffice. Some writers won’t honor a badge system, but those people will be exposed eventually.

I personally don’t approve of AI art because so much of it is based on straight theft. But I don’t think it can be stopped. Society probably lost any chance it had to regulate internet art when it accepted meme art, which is full of uncredited art, as an acceptable part of the internet. Meme art is now effectively in the public domain, because that’s what happens when you don’t protect your copyright.

But won’t generative AI art make many of us appreciate the skills of artists more? I sure hope so.

Amazon is now flooded with AI books. Amazon, too, needs a badging system. Maybe one imposed by regulations when real human beings return to the U.S. government.

I don’t know what any of that looks like. I only know that the status quo is broken.

On a personal and professional level, it means you start a project deeper into the process

Old farts like me are used to starting projects with a blank slate. AI eliminates that in almost every field.

If you’re a lawyer with a small staff of paralegals, many of them will probably be obsolete in another year. It sucks, but that’s just how it is. Some lawyers got in trouble early on for using AI to write legal briefs that were full of mistakes. AI will stop doing that to some degree, but that’s no excuse for not checking your work.

The only people who think all those Executive Orders issued by the Trump regime early in the administration were not AI-driven are people who’ve never seen what AI does. But at least presidential Chief of Staff Susie Wiles and her minions were smart enough to edit them. You’ll see more of that kind of stuff.

If you’re a grant writer, your competitor is already using Claude and other AI to research and even write out the basic activities of the nonprofits they are writing for. The day may come when you add your personality to the very end of the grant proposal, but let AI hammer out the meat of it, the stuff you hate doing anyway. Then you’ll add the final pitch. You’ll have more time to do that, instead of looking at the hours you spent on the project and crunching out that final talking point while you’re tired and anxious about a deadline.

Researchers will write peer-reviewed papers with AI. The smart ones will go over them with a fine-toothed comb, often with the assistance of AI, and be able to focus more on the testing process that their papers talk about.

If you’re in software (I’ll stick with code, since it’s what I know best), there’s no longer ever a reason to start a project from scratch.

If you’re familiar with coding, you are already using the predecessors to AI when you use libraries like jQuery for JavaScript or Bootstrap for CSS.32 Most good IDEs (software editing tools) have built-in tools for bootstrapping commonly used software patterns.

Modern web software libraries can already bootstrap an entire application for developers. Even some less modern ones, like Ruby on Rails,33 will do that for you. In Rails, a web developer can create the skeleton of a new application, here one named “store,” by simply typing this command into their CLI (Command Line Interface):

$ rails new store

This was before AI.

In this sense, AI is doing nothing new. This kind of stuff has been around for a long time. That simple command creates a directory structure and a large number of files to get you started, or “bootstrapped.”
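For readers who want to see what “bootstrapping” amounts to, here is a toy sketch in Python. It is not how Rails works internally, and the folder names, file names, and the bootstrap function are all made up for illustration. It only shows the basic idea: one command stamps out a starter set of folders and files so you don’t begin with a blank slate.

```python
from pathlib import Path
import tempfile

# Hypothetical starter layout, loosely inspired by a web project.
# This is NOT the real list of files that Rails generates.
SKELETON = {
    "app/models/.keep": "",
    "app/views/home.html": "<h1>Hello World!</h1>\n",
    "config/settings.txt": "app_name = {name}\n",
    "README.md": "# {name}\n",
}


def bootstrap(name: str, parent: Path) -> list[Path]:
    """Create a starter project folder and return the files written."""
    root = parent / name
    created = []
    for relative_path, template in SKELETON.items():
        target = root / relative_path
        # Make any missing folders before writing the file.
        target.parent.mkdir(parents=True, exist_ok=True)
        # Fill in the project name wherever the template asks for it.
        target.write_text(template.format(name=name))
        created.append(target)
    return created


if __name__ == "__main__":
    # Work in a throwaway folder so nothing is left behind on disk.
    with tempfile.TemporaryDirectory() as scratch:
        for path in bootstrap("store", Path(scratch)):
            print(path.relative_to(scratch))
```

A real generator writes far more files and wires them together, but the principle is the same: the tool does the repetitive setup and you start a few steps into the project.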

Another command creates a database:

bin/rails db:create

AI takes all this much further.

For example, you can now create an entire web application using any one of a variety of different AI tools.

But the current state of AI is still such that you need software skills to be able to do all this. You can also use your software skills to enhance an AI project. Or, you can visit GitHub, the huge repository used by many open source34 projects. There, you can fix open source bugs created by AI deployments.

Or, you can grab a piece of software. To customize a web application built with Gradio, a Python library commonly used for AI demos, you can pass your own HTML to its gr.HTML component:35

gr.HTML(value="<h1>Hello World!</h1>")

Our old friend Shumer from the beginning of this article is saying this will change. It probably will, but for now, that’s the current state of things.

If I were working on a nonfiction book, I could imagine trying AI to help me develop an outline.

One thing I’ve thought about trying AI for is asking it to read my newest novel and list all the characters and their characteristics. And maybe develop a timeline of events. One of the things I have trouble with as a writer is, “What time is it in this story? Is it still Sunday night?”

I’ve thought about using AI to generate a new timeline for the novel to replace this old one (paywall-free link):

Or maybe replace the cast of characters, since the novel has changed substantially from when the original cast of characters was developed:

https://medium.com/restive-souls/cast-of-characters-in-restive-souls-86ef72cc9f16

Or use AI to look for inconsistencies in character behavior.

In other words, we don’t need to use AI to help us write. But can we use it to help us organize and avoid pitfalls? And, thus, turn us into better writers?

I even considered using AI to see if this post could be organized better (of course it can). But I didn’t want to deal with trying to redo the footnotes. So I didn’t.

These are things I’m thinking about.

If you’ve made it this far, wow, amazing. I barely did.

What are you thinking about? Can you think of ways to use AI in your line of work?

Final Thoughts

In the final analysis, I agree with Shumer. There isn’t a way out, even for the most furious anti-AI crusaders. I’ve been one of those. There are so many aspects of AI I dislike that I could write a 500-page book about it. But it would have no impact. The most I can hope for is that an AI company will pirate it and I can join another class action suit against an AI firm.

This isn’t quite the same as “If you can’t beat ‘em, join ‘em.”

It’s more about, “How can I take advantage of the situation?”

It’s fair to call that a selfish choice. Everyone must make their own decisions on how to deal with AI’s inevitability.

But I can’t in good conscience tell young people, who are much more affected by this than I ever will be, to turn their backs and run away from AI. That’s like telling them to sign up for a lifetime of poverty.

It may come to that for most of us anyway. But if so, what will the tech bros do with all those unhappy people?

Oh, right. I’ve written about that, too…

Notes

While researching this, I checked out quite a few different AI implementations.

One of those was Suno, a music app that surely musicians detest. On the surface, it seemed pretty good. But pedestrian. Could lyricists use it to help get a better feel for the cadence of their song? Eh, maybe.

Pedestrian music is easy for AI because math lives deep inside music. But there’s no personality. There aren’t any riffs. Suno won’t be invited to a jam session with Daryl Hall. So I’ll leave you with one of those jam sessions instead, because it’s a place AI will never visit:

Thanks for reading! Leave a comment! The comment section is open for everyone.

Footnotes

Modeling technology refers to an underlying “model” that all AI tech currently uses. This model is based on massive amounts of information that the model has acquired somehow, sometimes illicitly, such as when Anthropic (Claude AI) downloaded pirated books. A model can be anything: a collection of math problems and solutions, algebraic functions, history lessons, maps, food recipes, software coding solutions, anything that can be considered retrievable information, especially if it’s on the internet. These models are slurped up by AI, which then creates complex pattern recognition routines to render output.

These models are referred to in the industry as “Large Language Models,” or LLMs for short.

A Command Line Interface (CLI) is a tool that all computers have that allows you to run commands for doing things like installing software and/or changing settings on your computer, among other things. CLIs have different names depending on the type of computer you use. I’m using a Mac, so it’s called “Terminal.” Linux has bash or shell or console, depending on the user’s preference and/or Linux flavor, and Windows has Windows Terminal, Command Prompt, and Windows PowerShell.

In this Mac Terminal session, I’ve installed Claude onto my computer…

In a future post, I’ll talk directly about my experiences with the tools.

Human augmentation itself isn’t new. I am wearing a pair of eyeglasses as I write this. What’s new is its scope.

Computer Aided Design (CAD) is software that allows users to design things on the computer, from boxes to shelf sets to cars.

Gerard, David. “Seedance’s ‘Generated’ AI Cruise-Pitt Demo Was a Green Screen and Face Swap.” Pivot to AI, February 18, 2026. https://pivot-to-ai.com/2026/02/18/seedances-generated-ai-cruise-pitt-demo-was-a-green-screen-and-face-swap/.

Aron Peterson. “SHOKUNIN STUDIO.” SHOKUNIN STUDIO, February 18, 2026. https://www.shokunin.studio/blog/2026/2/18/is-it-all-over-for-filmmakers.

It would be fair to ask, what makes a database any less probabilistic aside from accepted answers, and who makes those choices? But that’s an argument outside the scope of this article.

It’s dynamic because it can change through programming.

Mistry, Rohan. “The Mathematical Foundation of AI: What Everyone Misses.” Medium. Towards AI, October 29, 2025.

Ruminato Gift Link 🎁 https://pub.towardsai.net/the-mathematical-foundation-of-ai-what-everyone-misses-b1eedd27b60b. 🎁

And if so, the reason autocorrect works better than it used to is because it has built more complete proximity maps from which to draw samples and compare it to what you are typing.

There are plenty of open source AI models, but the most complex new iterations are highly secretive affairs. An open source project is a project that is “open” to all participants and, generally, freely available to the public.

Duffy, Clare. “The Big Wrinkle in the Multitrillion-Dollar AI Buildout.” CNN, December 19, 2025. https://edition.cnn.com/2025/12/19/tech/ai-chips-lifecycle-questions.

Weise, Karen. “Amazon’s $200 Billion Spending Plan Raises Stakes in A.I. Race.” Nytimes.com. The New York Times, February 5, 2026.

Ruminato Gift Link 🎁 https://www.nytimes.com/2026/02/05/technology/amazon-200-billion-ai.html 🎁

Worldometer. “GDP by Country (2025) - Worldometer,” 2025. https://www.worldometers.info/gdp/gdp-by-country/.

Lockett, Will. “AI Debt Is Spiralling out of Control.” Medium, March 2026.

Ruminato Gift Link 🎁 https://wlockett.medium.com/ai-debt-is-spiralling-out-of-control-bf0957767422. 🎁

Lockett, Will. Medium; ibid

Lockett, Will. Medium; ibid

Bacon, Auzinea. “Here’s How AI Data Centers Affect the Electrical Grid.” CNN, January 18, 2026. https://www.cnn.com/2026/01/18/business/ai-data-centers-electricity-prices.

Duffy, Clare. “‘There Are No Guardrails.’ This Mom Believes an AI Chatbot Is Responsible for Her Son’s Suicide.” CNN, October 30, 2024. https://www.cnn.com/2024/10/30/tech/teen-suicide-character-ai-lawsuit.

Young, Olivia. “Colorado Family Sues AI Chatbot Company after Daughter’s Suicide: ‘My Child Should Be Here.’” Cbsnews.com, October 3, 2025. https://www.cbsnews.com/colorado/news/lawsuit-characterai-chatbot-colorado-suicide/.

Reuters. “Why Are French Prosecutors Investigating Elon Musk’s X?” Reuters, February 3, 2026. https://www.reuters.com/technology/why-are-french-prosecutors-investigating-elon-musks-x-2026-02-03/.

From the New Yorker:

A couple of years ago, the company outgrew its old space and took over a turnkey lease from the messaging company Slack. It spruced up the place through the comprehensive removal of anything interesting to look at. Even this blankness is doled out grudgingly: all but two of the ten floors that the company occupies are off limits to outsiders. Access to the dark heart of the models is limited even further. Any unwitting move across the wrong transom, I quickly discovered, is instantly neutralized by sentinels in black.

Gideon Lewis-Kraus. “What Is Claude? Anthropic Doesn’t Know, Either.” The New Yorker, February 9, 2026. https://www.newyorker.com/magazine/2026/02/16/what-is-claude-anthropic-doesnt-know-either.

Alexios Mantzarlis. “AI Nudifiers Continue to Reach Millions and Make Millions.” Indicator, July 14, 2025. https://indicator.media/p/ai-nudifiers-continue-to-reach-millions-and-make-millions.

DiBenedetto, Chase. “1 in 6 Congresswomen Are Victims of AI-Generated Nonconsensual Intimate Imagery, according to New Report.” Mashable, December 15, 2024. https://mashable.com/article/nonconsensual-explicit-deepfakes-target-women-in-congress-more-than-men.

Newton, Casey. “What Is OpenAI Going to Do When the Truth Comes Out?” Platformer, March 3, 2026. https://www.platformer.news/openai-pentagon-surveillance-drones-backlash/?ref=ruminato.

Vance, Ashlee. “Exclusive: OpenAI and Sam Altman Back a Bold New Take on Fusing Humans and Machines.” Corememory.com. Core Memory, January 15, 2026. https://www.corememory.com/p/exclusive-openai-and-sam-altman-back-merge-labs-bci.

Merge.io. “Merge Labs,” 2026. https://merge.io/blog.

Most computer languages have what we call “libraries,” which can be used to save time writing code. jQuery, for example, lets you use a browser’s computer language, “JavaScript,” to more easily identify and manipulate parts of a page with just a few lines of code, and saves you from re-inventing the wheel for every task you want a web page to perform.

Bootstrap and other libraries allow developers to quickly establish how a web page appears to a user by packaging reusable chunks of a style sheet language called Cascading Style Sheets (CSS).

An open source project is a project that is “open” to all participants and, generally, freely available to the public. If you’re a user, you can usually download the finished product from a website.

For example, you don’t need to buy Microsoft Office if you want a word processor. You can just download Open Office, which is managed by one of the most reliable open source software distributors in the world, Apache. The Apache Foundation, back in the day, made most of the world’s web server software.

Open Office can even create .docx documents, the current Microsoft Word format, because Microsoft built that format on a hairy implementation of XML, an open standard for structuring documents.

Open Office is possible because developers volunteer their time to write code for it, and submit to an “open source” repository for fellow developers to review and approve. There’s even a bug submission process, also available to the public.

This is how most open source software projects work, and there are open source projects for almost anything you can imagine.

Ruby on Rails Guides. “Getting Started with Rails — Ruby on Rails Guides,” 2025. https://guides.rubyonrails.org/getting_started.html.
